An international group of artificial intelligence researchers has warned that an army of artificial intelligence bots could disrupt democratic processes by influencing social networks. The group says AI agents can be used on a massive scale to shape public opinion.

The group, which includes Maria Ressa, a Nobel Peace Prize winner and freedom of speech activist, along with prominent researchers from Berkeley, Harvard, Oxford, Cambridge and Yale universities, has published an article in the journal Science warning that an invasion of artificial intelligence bots is a destructive threat that can affect social networks and messaging apps.

The authors of the paper say that these systems automatically coordinate and enter online communities, creating a false consensus. These agents threaten democracy by imitating human social dynamics.

Authoritarian political leaders could use an onslaught of artificial intelligence bots to persuade citizens to accept the annulment of elections or to question the results of polls, the researchers warned. They predicted that by 2028 this technology will reach a level where it can be used widely and cheaply in political campaigns.

At the same time, the authors called for coordinated global action to counter this threat and reduce the effect of organized disinformation campaigns.

The threat of artificial intelligence bots in social networks is serious

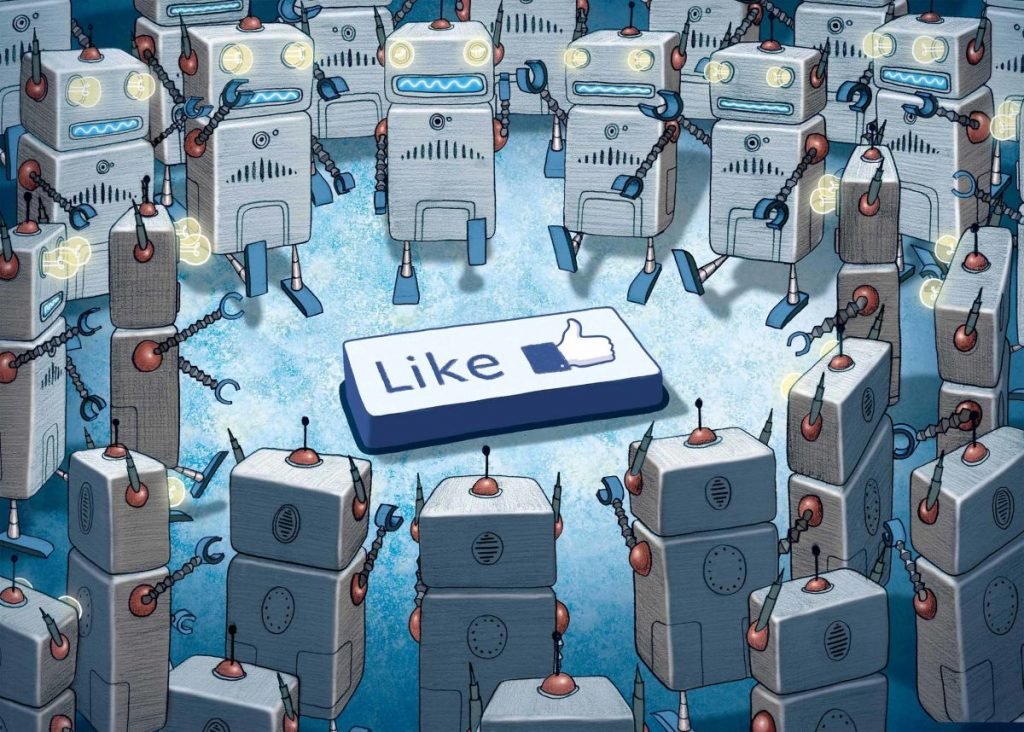

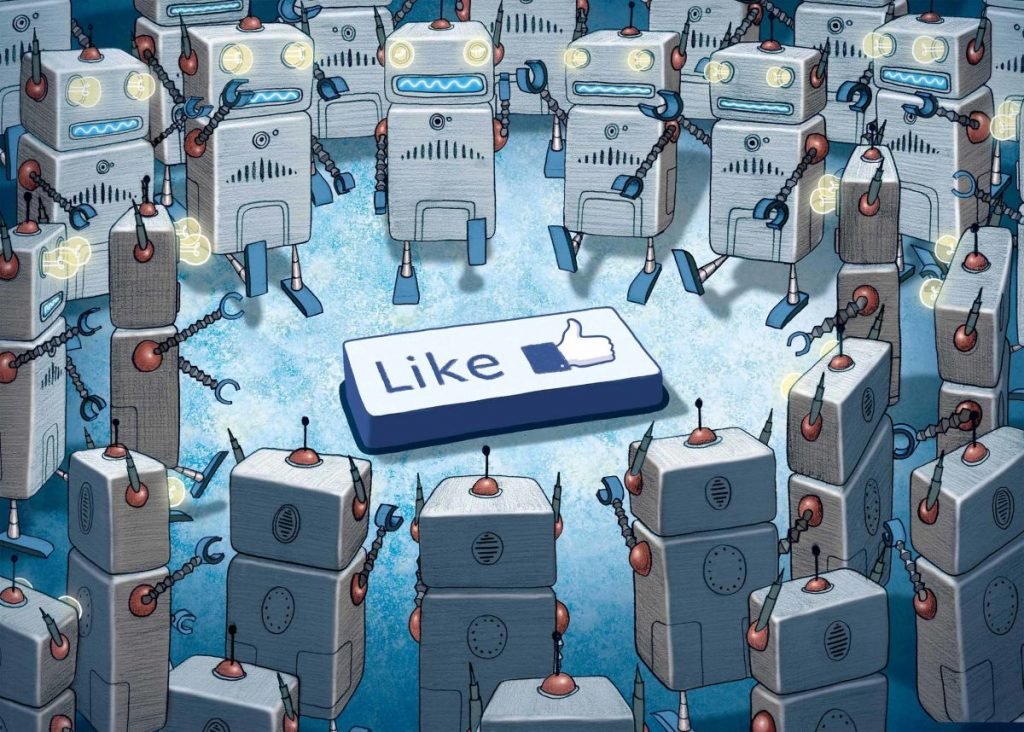

The report mentions that early versions of AI-driven operations to influence public opinion were used in the 2024 elections in Taiwan, India and Indonesia. These early cases remain limited in scale, but according to the researchers, they show the direction in which the technology is developing.

The researchers say political actors can employ an almost unlimited number of AI agents to pose as human users online. These agents can precisely enter different communities, learn their characteristics and sensitivities over time, and change public opinion on a large scale with targeted and persuasive lies.

According to the authors, the advancement of artificial intelligence in understanding the tone and content of conversations has intensified this threat. Agents are now better able to use appropriate conversational terms and to avoid automatic detection by timing their messages irregularly.

Puma Shen, a representative of Taiwan’s Democratic Progressive Party and an activist against Chinese disinformation, says voters in Taiwan are regularly targeted by Chinese propaganda and are often unaware of it. He reported that artificial intelligence bots have increased their level of interaction with citizens on the Threads and Facebook platforms over the last two to three months.

During political debates, these agents provide large amounts of unverifiable information, creating an “information explosion,” Shen explained. He said these bots may cite fake articles about the U.S. abandoning Taiwan. “These bots don’t directly say China is great, but encourage them to be neutral,” Shen added. Shen described this approach as dangerous, because in such an environment, activists like him are seen as “radical”.

Although the AI bot invasion has yet to be deployed at full scale, the combination of technical progress, low cost and a lack of strict regulation makes the threat an urgent issue for policymakers, according to the authors of the paper published in Science. They stress that without coordinated international action, future elections, including the 2028 US election, are at serious risk of organized manipulation.