OpenAI has released a new version of its coding model, GPT-5-Codex-Mini, which it presents as an efficient, low-cost option designed for developers.

Earlier in September, OpenAI released GPT-5-Codex, a GPT-5-based model optimized for agent-oriented coding in Codex, with significant improvements in reasoning, coding, and general performance.

The GPT-5-Codex model is designed to perform better on real-world software engineering tasks. These include creating new software projects, adding new functionality and tests to existing projects, large-scale code refactoring, and other advanced software work.

Introducing the GPT-5-Codex-Mini model for developers

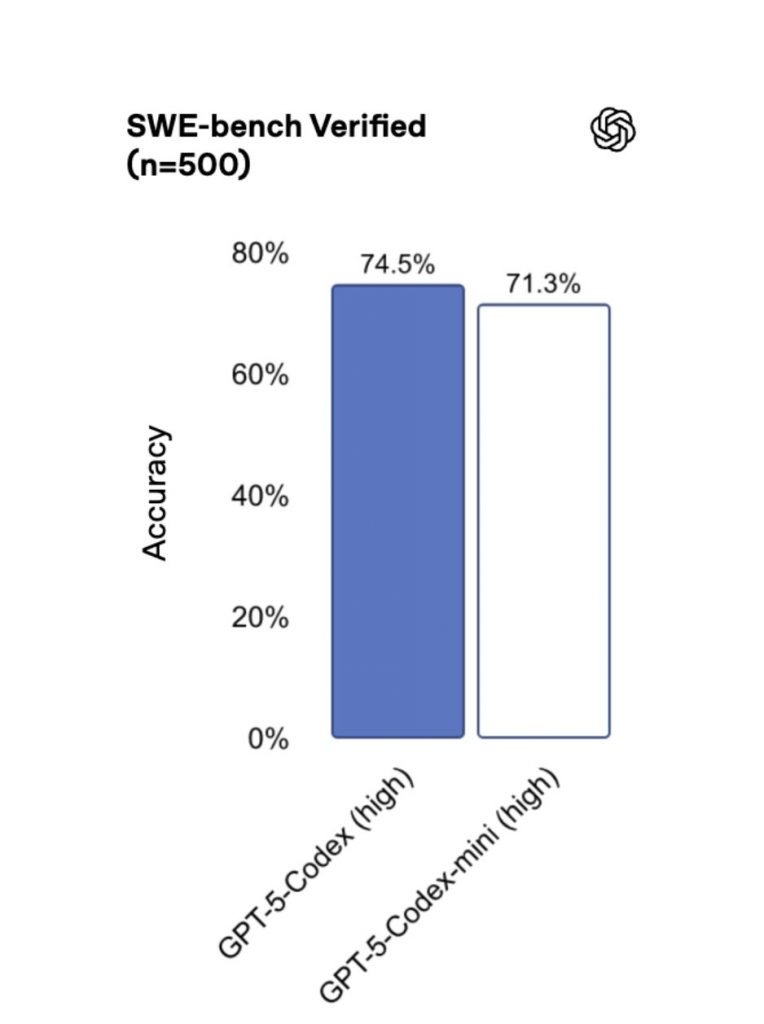

According to reports, OpenAI has now introduced GPT-5-Codex-Mini, a smaller and more affordable version that allows roughly four times more usage than GPT-5-Codex in exchange for a slight reduction in capabilities. On the SWE-bench Verified benchmark, GPT-5 High scored 72.8%, GPT-5-Codex scored 74.5%, and the new GPT-5-Codex-Mini scored 71.3%. The mini model therefore trails the original by only 3.2 percentage points.

OpenAI recommends that developers use GPT-5-Codex-Mini for lighter software engineering tasks or when they are approaching the usage limits of the original model. Codex itself suggests switching to the mini version once a developer reaches 90% of the limit. The model is now available in the CLI and as an IDE plugin, with API support coming soon.
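The switching rule described above can be sketched in a few lines of Python. This is only an illustration of the reported recommendation, not how Codex implements it: the model identifier strings, the `UsageSnapshot` type, and the `choose_model` function are all hypothetical names introduced here, and the only value taken from the article is the 90% threshold.

```python
from dataclasses import dataclass

# Hypothetical identifiers for illustration; the article only names the models.
FULL_MODEL = "gpt-5-codex"
MINI_MODEL = "gpt-5-codex-mini"

# The article reports that Codex suggests switching at 90% of the usage limit.
SWITCH_THRESHOLD = 0.90


@dataclass
class UsageSnapshot:
    """Hypothetical record of usage within the current limit window."""
    used: float   # usage consumed so far, in whatever unit the limit uses
    limit: float  # maximum usage allowed in the current window

    @property
    def fraction(self) -> float:
        if self.limit <= 0:
            raise ValueError("limit must be positive")
        return self.used / self.limit


def choose_model(usage: UsageSnapshot, light_task: bool = False) -> str:
    """Pick the mini model for light tasks or when usage nears the limit."""
    if light_task or usage.fraction >= SWITCH_THRESHOLD:
        return MINI_MODEL
    return FULL_MODEL
```

For example, `choose_model(UsageSnapshot(used=50, limit=100))` keeps the full model, while the same call with `used=90` or with `light_task=True` selects the mini model.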

By improving GPU performance, OpenAI has raised the usage rate limits for ChatGPT Plus, Business, and Edu users by 50% and given Pro and Enterprise users priority processing to ensure maximum speed and performance.

OpenAI has also made internal optimizations to make Codex usage predictable and consistent. Developers should now get the same amount of usage throughout the day, regardless of network load or how traffic is routed. Previously, cache misses could reduce the usage developers got, but this has now been fixed, giving users a stable and reliable experience.