Many investors and technologists call 2025 “the year of agents.” However, Andrej Karpathy, one of the co-founders of OpenAI, is not very optimistic about the current state of this technology. In a recent interview, he said it will take about a decade to achieve truly effective AI agents and “solve all the problems.”

Artificial intelligence agents are advanced virtual assistants that can carry out a variety of tasks independently, without step-by-step commands from the user. However, Karpathy believes that current models are not yet ready for this role.

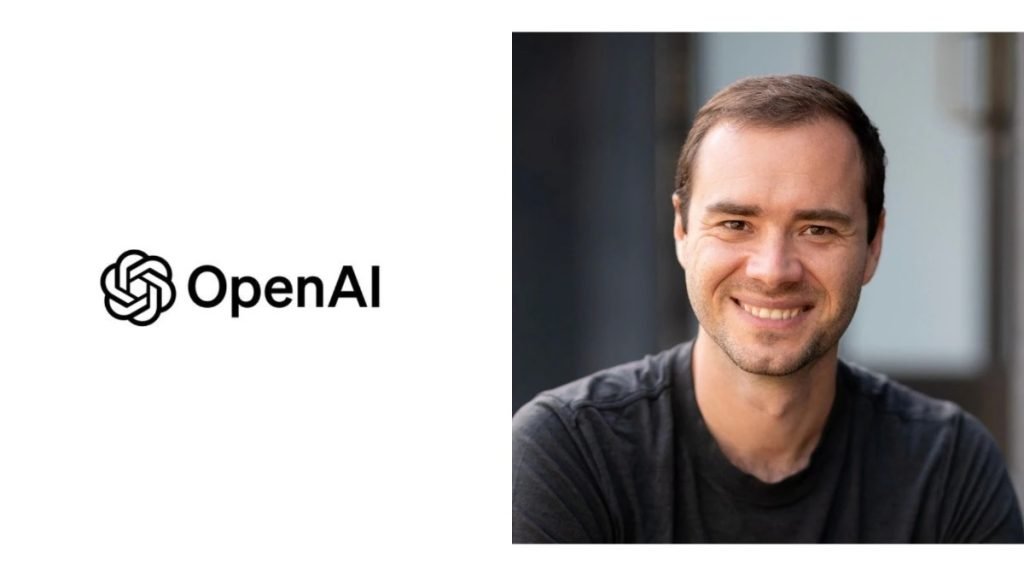

OpenAI co-founder comments on artificial intelligence agents

“(Agents) simply don’t work,” Karpathy said on the Dwarkesh Podcast. “They are not intelligent enough, they are not multimodal enough and they cannot use computers.”

He pointed out some basic flaws in these systems. For example, you cannot tell them something and expect them to remember it. They are also cognitively lacking and do not function properly. Karpathy emphasized that it will take about a decade to solve all these problems.

One of Karpathy’s main criticisms of the AI industry is its excessive focus on tools that go beyond the models’ current capabilities. “The industry is living in a future where completely autonomous entities collaborate to write all the code and humans are rendered useless,” he says.

But Karpathy does not want such a future. In his ideal vision, humans and artificial intelligence work together. “I want (the agent) to bring me the API docs and show that it has used them correctly,” he says. “I want it to make fewer assumptions, ask me when it is not sure about something, and collaborate with me.”

Of course, Karpathy does not consider himself an artificial intelligence skeptic. “My view of AI is about 5 to 10 times more pessimistic than what you’ll find at AI parties in San Francisco or on your Twitter timeline, but I’m still quite optimistic compared with the growing tide of AI naysayers and skeptics,” he says.

Karpathy is not the only one to express concern about agents’ performance. Last year, Quintin Au, a director at Scale AI, pointed out the problem of errors accumulating as agents work through a task.

“Right now, every time an AI takes an action, there’s roughly a 20 percent chance of error,” he explained. “If the agent needs 5 steps to complete a task, there is only a 32 percent chance that it will complete all steps correctly.” This compounding of errors calls into question the reliability of agents for complex tasks.
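
The arithmetic behind that figure can be sketched in a few lines of Python. This is only an illustration, not anything from Au or Scale AI: the function name `chain_success_probability` is hypothetical, and the sketch assumes every step fails independently and with the same error rate, which real agents may not satisfy.

```python
def chain_success_probability(step_error_rate: float, steps: int) -> float:
    """Chance that every step of a task succeeds.

    Assumption (not from the article): steps fail independently and
    share the same error rate, so the success chances multiply.
    """
    if not 0.0 <= step_error_rate <= 1.0:
        raise ValueError("step_error_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return (1.0 - step_error_rate) ** steps


if __name__ == "__main__":
    # 20 percent error per action, as in the quote above.
    for steps in (1, 5, 10, 20):
        p = chain_success_probability(0.20, steps)
        print(f"{steps:>2} steps: {p:.1%} chance of a flawless run")
```

With a 20 percent error rate, five steps give 0.8 to the fifth power, or about 0.328, which matches the roughly 32 percent quoted. Under the same assumptions the odds keep shrinking as tasks get longer, falling to about 11 percent at ten steps.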