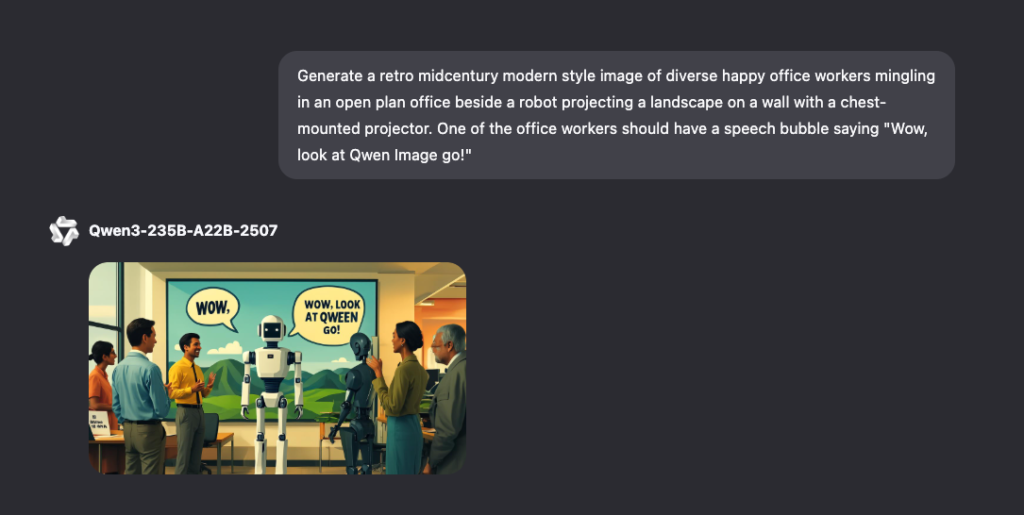

Alibaba’s artificial intelligence team in China has unveiled a new image generation model called Qwen-Image. The model supports both English and Chinese.

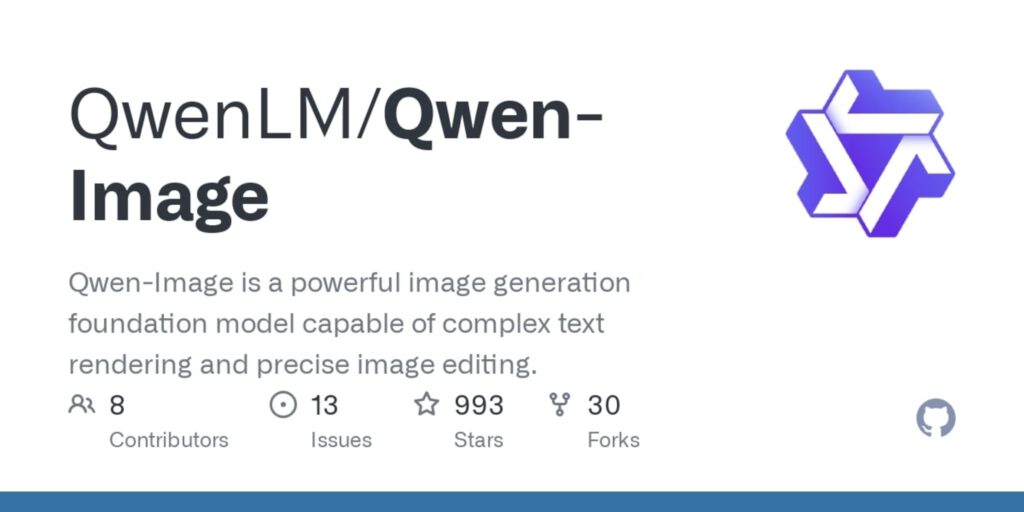

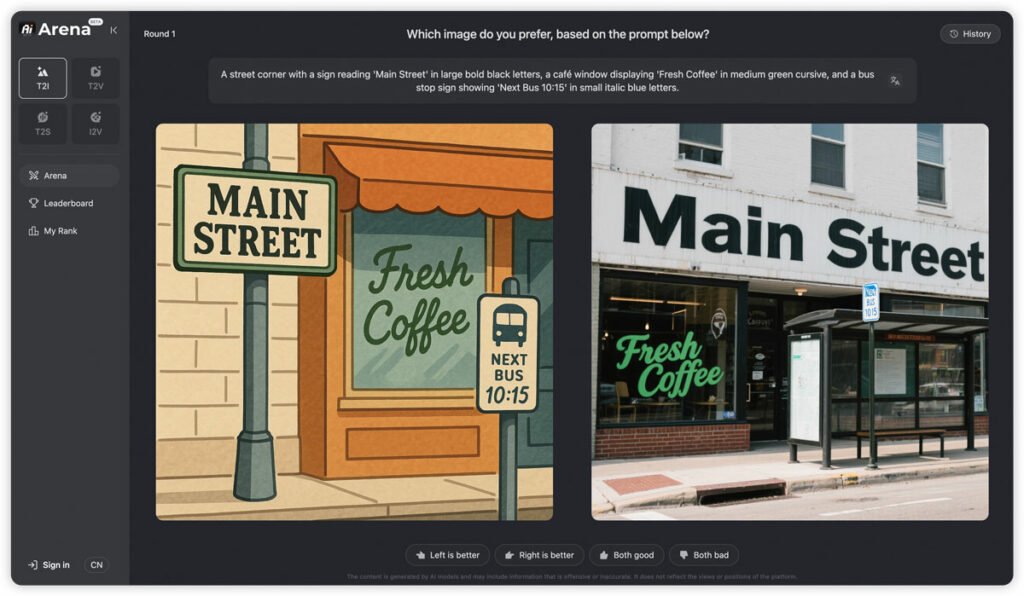

According to VentureBeat, Qwen-Image stands out from its competitors through its focus on rendering text accurately within images. Because it supports both alphabetic and logographic scripts, the model is particularly capable of handling complex typography, multi-line layouts, paragraph-level semantics and bilingual content.

Qwen-Image can produce a wide range of images and posters

With Qwen-Image, users can create content such as movie posters, presentation slides, storefront displays, handwritten poems and infographics that contain text.

Users can interact with the model by selecting the Image Generation mode from the options below the prompt input box on the Qwen Chat website.

Initial tests, however, show that Qwen-Image's accuracy and image quality fall short of competitors such as Midjourney. In early experiments the model also made errors in understanding prompts and did not always render the requested text faithfully.

Midjourney, however, offers only a limited number of free images, and generating more requires a paid plan. One advantage of Qwen-Image is that it is open source and has been released on the Hugging Face platform, so any organization or third-party provider can use it for free.

Qwen-Image is published under the Apache 2.0 license, which permits commercial and non-commercial use, redistribution and modification. Derived works must, however, include attribution and a copy of the license text.

Alibaba’s new model can be very useful for organizations looking for an AI tool to produce internal or external content such as flyers, advertisements, announcements, newsletters and other digital communications.