In the technology world, some questions belong not only to science but also to philosophy, psychology, and even art. “Can robots feel?” is one of these questions. When artificial intelligence is mentioned, what usually comes to mind is cold, precise, emotionless calculation. But today’s world has moved beyond that: the conversation is now about emotional artificial intelligence, systems that not only process information but also try to understand it. We are on the verge of an age in which artificial intelligence not only analyzes our behavior but may also react emotionally. In this article, we take a close look at the concept of “artificial intelligence and emotions”, from the technologies that allow robots to analyze human emotions to the moral, psychological, and scientific challenges of this process. Can it be said that robots understand? Does “feeling” in a machine make sense, or is it merely a simulation of human reactions? And most importantly, where will this technology go?

The emergence of emotional artificial intelligence in the modern world

For decades, the development of artificial intelligence focused mainly on logic, algorithms, and precise numerical decisions. Recently, however, a large number of researchers and technology companies have turned toward developing systems capable of “understanding” human emotions. This field, known as affective AI, tries not only to respond to human language but also to analyze facial expressions, tone of voice, body language, and even written text.

A prominent example of this progress is Affectiva, a company that uses machine learning and large databases of facial images to identify emotions such as sadness, happiness, surprise, and anger. Meanwhile, companies such as Soul Machines are trying to create fully digital characters that can take part in a conversation naturally and respond to the emotional state of the other party.

Applications of emotion analysis with artificial intelligence have spread across various industries, from contact and customer support centers to market analysis, education, mental health, and even public security. In online education, this technology can adapt the teaching path by detecting signs of fatigue or inattention on the student’s face. In mental health, some chatbots can analyze the user’s emotional state and offer behavioral suggestions or even put the user in touch with a human therapist.

However, this path is not without challenges. Human emotions are highly complex, multilayered, and context-dependent. It is still unclear whether artificial intelligence can understand the depth of these emotions or only produces similar behavior by relying on data. There are also concerns about privacy, data bias, and unethical use of these technologies that cannot be ignored. Overall, the emergence of emotional artificial intelligence is an ongoing revolution, a path that may forever change the way humans and machines interact. The main question now is: does this intelligence really “understand”, or is it just playing a role?

How do machines analyze human emotions?

At first glance, it may seem strange that a machine can “understand” human emotions. In reality, what is now called emotion analysis by artificial intelligence is a set of multilayered algorithms and technologies that allow a machine to interpret a person’s mental and emotional state from visual, audio, and textual data.

The most important tools behind this analysis are artificial neural networks, models inspired by the structure of the human brain. Trained on millions of examples of facial images, voice tones, and even written sentences, these networks identify the patterns associated with each emotional state. For example, raised lip corners and a mild contraction of the eyelids are usually a sign of happiness; computer vision algorithms process these cues and output the result as an “emotional label”.
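
The step from facial cues to an emotional label can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses a single linear layer followed by a softmax, which is far simpler than the deep networks real systems rely on, and the three input features, the weights, and the function names are all hypothetical values set by hand rather than learned from data.

```python
import math

# Hypothetical hand-set weights for illustration only; a real network would
# learn them from many labeled face images.
# Feature order: [lip_corner_raise, eyelid_contraction, brow_lowering], each in 0..1.
WEIGHTS = {
    "happiness": ([3.0, 2.0, -2.0], 0.0),
    "anger": ([-2.0, 0.5, 3.0], 0.0),
    "neutral": ([0.0, 0.0, 0.0], 1.0),
}


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def emotion_probabilities(features):
    """Return a dict mapping each emotion label to its probability."""
    labels = list(WEIGHTS)
    scores = []
    for label in labels:
        weights, bias = WEIGHTS[label]
        scores.append(sum(w * x for w, x in zip(weights, features)) + bias)
    return dict(zip(labels, softmax(scores)))


def emotion_label(features):
    """Pick the most probable label for the given facial features."""
    probs = emotion_probabilities(features)
    return max(probs, key=probs.get)


if __name__ == "__main__":
    smile = [0.9, 0.6, 0.0]  # raised lip corners, mildly contracted eyelids
    print(emotion_label(smile))
```

With these made-up weights, the smiling input is labeled as happiness. In a real system the features themselves would also be extracted automatically from the image rather than supplied by hand.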

In addition, natural language processing (NLP) plays a key role in identifying emotions in text. Suppose a user writes in an online conversation, “I’m really upset.” An NLP algorithm using semantic and sentiment analysis can detect this and respond accordingly. The same technology is used in platforms such as Replika or advanced chatbots to create a more personalized and empathetic experience.
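
To make the idea concrete, here is a minimal sketch of text-based emotion detection. It assumes a tiny hand-written word list (a lexicon) instead of the trained language models that production systems use; the lexicon, the canned replies, and the function names are hypothetical and exist only for this example.

```python
import re
from collections import Counter

# Hypothetical toy lexicon; real systems learn these associations from data.
EMOTION_LEXICON = {
    "upset": "sadness",
    "sad": "sadness",
    "unhappy": "sadness",
    "happy": "happiness",
    "glad": "happiness",
    "angry": "anger",
    "furious": "anger",
    "worried": "anxiety",
    "anxious": "anxiety",
}

# Hypothetical canned replies, one per detected emotion.
REPLIES = {
    "sadness": "I'm sorry to hear that. Do you want to talk about it?",
    "happiness": "That's great to hear!",
    "anger": "That sounds frustrating. What happened?",
    "anxiety": "That sounds stressful. What is worrying you most?",
}


def detect_emotion(text):
    """Return the most frequent emotion label found in the text, or 'neutral'."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = Counter(EMOTION_LEXICON[w] for w in words if w in EMOTION_LEXICON)
    if not counts:
        return "neutral"
    return counts.most_common(1)[0][0]


def choose_reply(text):
    """Pick a reply that matches the detected emotion."""
    return REPLIES.get(detect_emotion(text), "Thanks for sharing. Tell me more.")


if __name__ == "__main__":
    print(detect_emotion("I'm really upset."))
    print(choose_reply("I'm really upset."))
```

A word list like this misses negation ("not happy"), sarcasm, and context, which is one reason real systems rely on models trained on large amounts of text.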

Of course, emotion analysis is not limited to words or facial expressions. Some systems, in wearables or digital health devices, can detect physiological patterns associated with specific emotions by examining breathing rhythm, heart rate, or skin temperature. For example, an increased heart rate and sweating may indicate stress or anxiety.
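
As a rough sketch of how a wearable might flag such a pattern, the snippet below compares a current heart rate reading with the wearer's own resting baseline using a z-score. The threshold of 2.0, the sample readings, and the function name are assumptions made for illustration, not values taken from any real device.

```python
from statistics import mean, stdev


def is_elevated(baseline_bpm, current_bpm, z_threshold=2.0):
    """Return True if current_bpm is unusually high relative to the baseline.

    baseline_bpm: resting heart rate samples (beats per minute) for one person.
    z_threshold: hypothetical cutoff, in standard deviations above the mean.
    """
    if len(baseline_bpm) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline_bpm)
    sigma = stdev(baseline_bpm)
    if sigma == 0:
        # A perfectly flat baseline: any reading above it counts as elevated.
        return current_bpm > mu
    return (current_bpm - mu) / sigma > z_threshold


if __name__ == "__main__":
    resting = [62, 64, 63, 65, 61, 66, 64]  # made-up resting readings
    print(is_elevated(resting, 95))
    print(is_elevated(resting, 65))
```

An elevated reading alone does not prove stress, since exercise, caffeine, or illness raise heart rate too, so a signal like this would normally be combined with other inputs before any emotional label is assigned.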

However, challenges remain. Human emotions are often ambiguous. A smile may signal happiness, anxiety, or even hidden anger. Artificial intelligence has not yet reached a level where it can analyze these complex layers with high accuracy. Cultural and individual diversity is also a problem: what is a sign of respect in one culture may be a sign of disrespect in another. If artificial intelligence algorithms are trained only on limited data, emotional and cultural biases are likely to occur.

Even so, the pace of these technologies is stunning. Companies like Microsoft, IBM, and Google are investing heavily to develop systems that not only identify human behavior but also respond to it appropriately. It is here that the boundary between machine and human blurs, and the concept of artificial intelligence and emotions turns from a purely scientific discussion into a philosophical and even ethical subject.

Applications of emotional artificial intelligence in the real world

When it comes to “emotional artificial intelligence”, we may imagine something purely experimental or belonging to a distant future. But the reality is that this technology has already entered our daily lives, not in a limited way but on a wide and diverse scale. In the customer service industry, many large companies use artificial intelligence to analyze the tone of a customer’s voice. If a caller sounds anxious or angry, the system automatically routes the call to a more experienced representative. In addition to increasing customer satisfaction, this also reduces support costs. Companies like Amazon and Google also try to estimate users’ likely emotions by analyzing their behavior while shopping or browsing products.

One of the most exciting applications of artificial intelligence in this area is in games. Games like Detroit: Become Human are not only leaders in graphics and storytelling but have also tried, using artificial intelligence, to create characters that respond to the player’s emotions. In the near future, we can imagine games that change the gameplay, the story path, or the difficulty depending on the user’s emotional state. This level of interaction will go beyond play and lead to a deeper and more human experience.

In the field of mental health, the potential of emotional artificial intelligence is remarkable. Applications like Wysa or Woebot use machine learning to interact with users. By analyzing words, sentences, and even the time of writing, these chatbots try to understand a person’s mental state and provide emotional support. Although these systems do not replace real therapists, they are very useful as a first line of interaction in times of crisis.

In education, platforms are being developed that use a webcam and image processing to analyze students’ feelings while they learn and to estimate how well they understand the concepts. For example, if a student looks tired or confused, the system can pause the lesson, explain further, or switch to another teaching method.

Even in the world of smart cars, this technology is in use. Some advanced cars can detect fatigue or drowsiness by examining the driver’s face and eye patterns. In the not too distant future, these cars may understand our emotions while we drive and play appropriate music, adjust the cabin lighting, or even suggest stopping to rest.

Obviously, all of these applications are useful only if the data is accurate and unbiased. If an artificial intelligence system makes a mistake or misreads a situation in its analysis, the user’s trust may be lost, or a wrong decision may be made in a critical situation. That is why most researchers emphasize that this technology should be used alongside humans and not instead of them, especially in areas such as treatment, education, and security. Emotional artificial intelligence is no longer a fantasy; it is a reality that is changing our relationship with the digital world. This relationship, if properly guided, can bring us closer to machines that understand not just what we say but what we feel.

Ethical boundaries in the design of emotional artificial intelligence

With the introduction of emotional artificial intelligence into human life, one of the most controversial issues to attract the attention of many professionals, philosophers, and legislators is “ethics”. Should a machine know our feelings? Is it right to predict or even steer our behavior using emotional data? And most importantly, where is the boundary between real empathy and artificial simulation?

One of the main concerns in this regard is the privacy of emotions. When you talk to a chatbot or voice assistant and it analyzes your emotions based on the tone of your voice or the content of your messages, where is this emotional information stored? Who has access to it? And have you consented to the collection and processing of such sensitive data? These questions do not yet have sufficiently transparent, legally grounded answers.

The commercial use of emotions is another challenging aspect of this technology. Imagine a company deciding which product to show you based on your emotions, or social network algorithms trying to show you content that keeps you in a particular emotional state. Here we are no longer dealing with a purely intelligent tool but with a system that may manipulate human emotions.

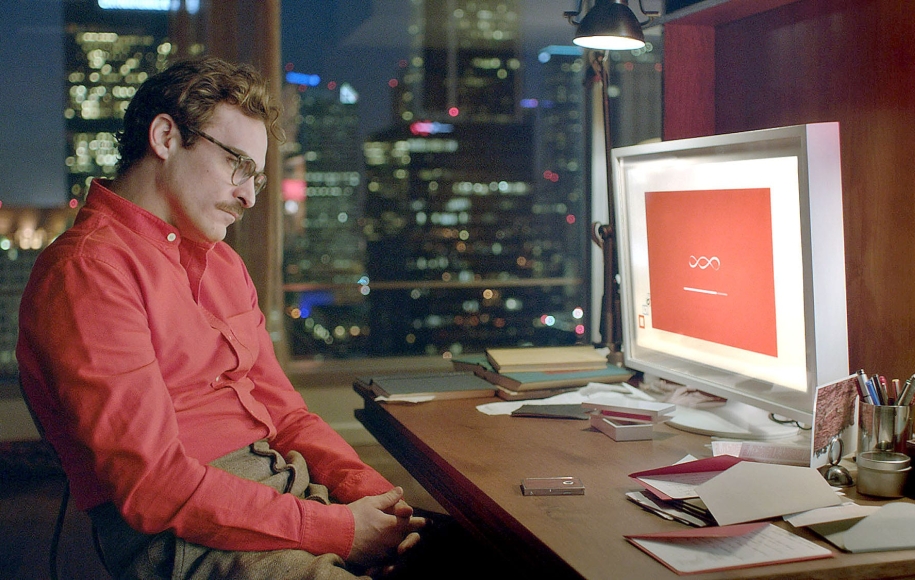

Alongside these, human relationships with machines have also entered a new phase. What happens when a lonely or depressed person communicates with a chatbot that pretends to “feel”? Is it helpful, or can it replace real human relationships and cause psychological harm instead of recovery? In Japan and some other countries, there have been reports of strong dependence on robots that has blurred the boundary between human and machine interaction.

On the other hand, many psychologists believe that interacting with artificial intelligence can be useful for people with social difficulties or anxiety. Without fear of judgment, they can express their emotions and receive feedback that gives them, to some extent, a sense of being understood. This is used especially in digital cognitive-behavioral therapies.

Internationally, organizations such as the European Union and UNESCO are formulating ethical frameworks for the development and use of artificial intelligence and emotions. These frameworks strive to balance innovation and responsibility, especially in cases where children, the elderly, or vulnerable people are involved.

Finally, the main question is: do we want machines to understand us, or just to seem as if they do? And if they can really understand emotions someday, can we still call them mere tools? With the progress of emotional artificial intelligence, the boundary between machine and human grows thinner by the day, and this confronts us with one of the most important and sensitive challenges of the 21st century.

Emotional intelligence and interaction in human relationships

With the ongoing advancement of technology, the boundary between human interaction and communication with machines has narrowed. What once existed only in sci-fi stories is becoming a tangible reality today: an emotional connection between human and machine. One of the remarkable aspects of artificial intelligence and emotions is the attempt to simulate human empathy in systems that are supposed to act as a coach or even a friend.

Emotional artificial intelligence has made it possible for robots and digital assistants not only to execute commands but also to recognize the emotions of the person they are addressing. This feature is particularly important in areas such as elderly care, special needs education, and psychological support for patients. Robots that respond with a smile, speak softly when they detect sadness, or try to calm the user when they sense anxiety play, in a way, the role of “sympathy” and “digital empathy”.

However, this strength can become one of the technology’s most vulnerable points. When a lonely or vulnerable person becomes emotionally dependent on a non-human entity, the relationship can cross the boundary of healthy interaction. There have been reports of elderly people or adolescents who have become strongly dependent on chatbots or robots: entities that, though seemingly warm and understanding, lack real understanding, human empathy, and the capacity for moral decision-making.

Emotional artificial intelligence can also enter relationships in the business world. In some large companies, systems are designed to help the HR team analyze employees’ emotions from their faces or voices and predict the level of stress, job satisfaction, or the likelihood of leaving the organization. These tools can be very efficient, but they have also sparked debates about privacy and workplace ethics.

Another aspect of this is children’s interaction with technology. The new generation of children, who grow up with robots and voice assistants from a young age, is forming a new kind of relationship with technology. From the beginning, they learn that they can share their emotions with entities that react without being human. How will this affect their emotional and social development in the long run?

There is still no definitive answer to this question. In general, the entry of emotional artificial intelligence into human relationships has great potential to help and to improve quality of life, but it must be used with care, awareness, and ethical frameworks. We need to decide how to define the boundary between reality and simulation, and how far we will allow a machine to take a human’s place in our lives.

The role of emotion analysis in surveillance and security systems

In a world where technology is ever more closely tied to daily life, emotional artificial intelligence is not limited to roles of companionship and service. One of the emerging and controversial applications of this technology is its use in surveillance and security systems, where emotion analysis can help identify potential threats, control crowds, or even prevent crime.

Emotion recognition is a branch of artificial intelligence that uses visual data (such as facial expressions), acoustic data (such as tone of voice), and even physiological data (such as heart rate or sweating). The technology is currently being tested or implemented at some airports, subway stations, large stores, and even schools.

The main assumption is this: if someone shows signs of severe anxiety, anger, or abnormal fear, they may require further investigation or even urgent intervention. For example, at airports such as Shenzhen in China or at some police stations in the UK, systems have been installed that scan people and try to identify signs of tension or potential risk by matching their facial expressions against visual databases. In theory, such a system can prevent adverse events, but in practice it faces serious ethical and technical challenges.

One of the main concerns is the low accuracy of these systems in real environments. Unlike in the laboratory, real-world lighting conditions, ethnic and cultural characteristics, and the diversity of human emotional reactions can dramatically reduce the accuracy of emotion recognition. For example, some studies have shown that facial analysis systems perform worse at recognizing emotions in Black or Asian people than in white people, a problem that can lead to wrong judgments and even discriminatory measures.

This raises a serious question: do governments or companies have the right to track and analyze the emotions of citizens? In European countries, the GDPR allows the collection of biometric and emotional data only under very specific conditions, but in many parts of the world there are no such restrictions, and this has raised widespread concerns.

Emotion analysis with artificial intelligence has also been used in schools to monitor students’ morale and in workplaces to gauge employee satisfaction. In some cases, companies such as Amazon or Microsoft have registered patents for tools that can evaluate employees’ performance or start a supportive conversation if needed. But the key question is whether such measures are really helpful or a hidden, subtle form of control.

Although emotion analysis can play an important role in security and monitoring, great care must be taken that it does not stray from the ethical path. The technology should be used with transparency, informed consent, and careful oversight. Only then can we hope that artificial intelligence and emotions will become a tool for support and empathy instead of a tool for control.

The last word

When it comes to artificial intelligence, the first images that usually come to mind are precise robots, data processing algorithms, and emotionless machines. But this traditional notion is now changing. Emotional artificial intelligence is a concept that blurs the boundary between machine and human: algorithms that can not only analyze our emotions but also try to understand and even recreate them. With the introduction of technologies such as emotion analysis, empathetic chatbots, caregiving robots, and emotional surveillance tools, we are facing a phenomenon that can be both an opportunity to transform human interactions and a serious threat to privacy, mental health, and social identity.

If artificial intelligence can detect our distress and calm us, is this experience a real relationship even though nothing is really “felt”? If a child becomes attached to a robot that never gets tired and always smiles, will that child still be interested in human relationships? And if governments use our feelings to monitor and control us more, what remains of our inner freedom?

The world is moving towards a future in which artificial intelligence may shape not only our actions but also our emotions and decisions. Along this path, we must stay alert. Yes, technology can make our lives better, but only if we can distinguish between real and simulated empathy, and between help and domination. Artificial intelligence and emotions may be the most important moral test of the 21st century; a test that, if we fail it, leaves a future without humanity awaiting us.

Source: Digikala Mag

Frequently asked questions

What is emotional artificial intelligence?

It is a technology that tries to identify and respond to human emotions.

Can robots really feel?

No, they only simulate emotional behaviors.

Is emotional artificial intelligence used in psychotherapy?

Yes, some chatbots are designed for initial psychological support.

Is emotion analysis with artificial intelligence reliable?

It performs well under experimental conditions, but in the real world it still faces many challenges.