A new study shows that 3.5% of the texts produced by the DeepSeek artificial intelligence model are significantly similar in style to ChatGPT outputs. These findings may be a sign that DeepSeek used OpenAI outputs in its training process.

According to Forbes, the study was conducted by Copyleaks, a company active in AI-based content identification. According to the company, the results could have important consequences for intellectual property rights, legislation and the future development of artificial intelligence.

Similarity of DeepSeek's writing style to OpenAI's

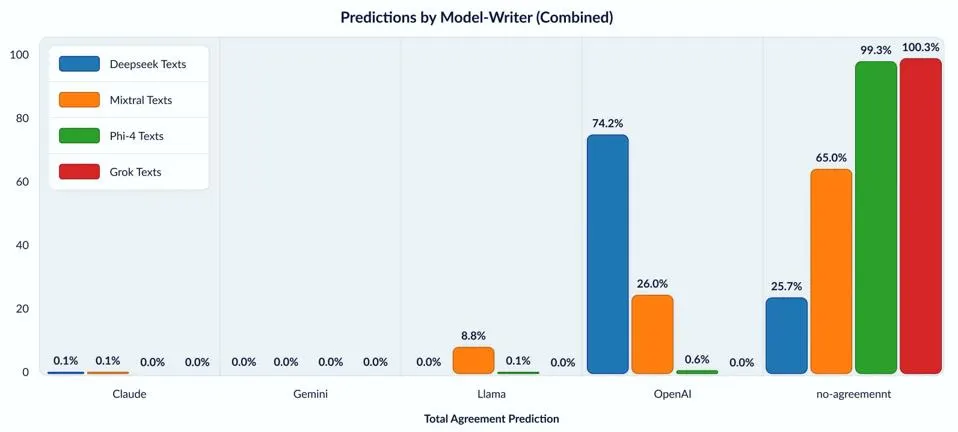

In this study, Copyleaks used screening technology and classification algorithms to identify text produced by different language models, including OpenAI, Claude, Jina, Llama and DeepSeek. The classification relies on a consensus voting method to minimize the probability of error and increase accuracy.
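The consensus voting idea described above can be illustrated with a minimal Python sketch. It is not Copyleaks' implementation: the function name `consensus_label`, the `min_agreement` threshold and the three example votes are all hypothetical, and the sketch simply assumes that each detector returns one model name per text.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus_label(votes: Sequence[str], min_agreement: float = 1.0) -> Optional[str]:
    """Return the label the detectors agree on, or None if agreement is too low.

    votes: one predicted model name per detector (hypothetical detectors).
    min_agreement: fraction of detectors that must back the top label;
                   1.0 (an assumption here) means every detector must agree.
    """
    if not votes:
        return None
    # Find the most frequently predicted label and how many detectors chose it.
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


# Hypothetical votes from three independent style classifiers.
print(consensus_label(["OpenAI", "OpenAI", "OpenAI"]))                     # OpenAI
print(consensus_label(["OpenAI", "Claude", "OpenAI"]))                     # None
print(consensus_label(["OpenAI", "Claude", "OpenAI"], min_agreement=0.5))  # OpenAI
```

Requiring full agreement trades coverage for precision: a text is attributed to a model only when no detector dissents, which is one way a voting scheme can reduce false attributions.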

The key finding was that the texts produced by most models had a unique style, but a significant portion of DeepSeek's outputs were similar to OpenAI's.

In an email interview, Shay Nissan, head of data science at Copyleaks, compared the study to the work of a handwriting expert who tries to identify the author of a handwritten text by comparing it with other people's handwriting. The results, he said, are surprising and very important.

Possible violation of OpenAI's intellectual property rights

Nissan emphasizes that this is not definitive evidence that DeepSeek directly used OpenAI outputs, but it raises serious questions about the model's training process and data sources.

If DeepSeek is found to have used OpenAI-generated texts to train its model without authorization, it could face significant legal consequences for infringing intellectual property and violating OpenAI's terms of service. The lack of transparency about training data in the artificial intelligence industry deepens this challenge and highlights the need for clear regulatory frameworks that require training sources to be disclosed.

Ethical and legal challenges

Although OpenAI itself has been criticized for using web content without explicit permission, the similarity of DeepSeek's style to ChatGPT's adds a new dimension to the discussion. In the absence of specific legal procedures, such cases are difficult to pursue, but tools such as fingerprint identification can be a powerful means of tracking and investigating possible violations.

While some experts believe that models are likely to converge gradually because they are trained on similar data, Copyleaks says its consensus method is designed to detect subtle differences, and that this similarity cannot be attributed to data overlap.

Finally, Nissan emphasized that even if training data is shared, the model architecture, fine-tuning methods and content generation techniques of each model are unique. This makes each model's fingerprint different from the others.

It is still unclear whether DeepSeek actually used OpenAI outputs without authorization, but these questions will certainly be a serious part of discussions on artificial intelligence development and regulation in the near future. DeepSeek has not yet responded to requests for comment.