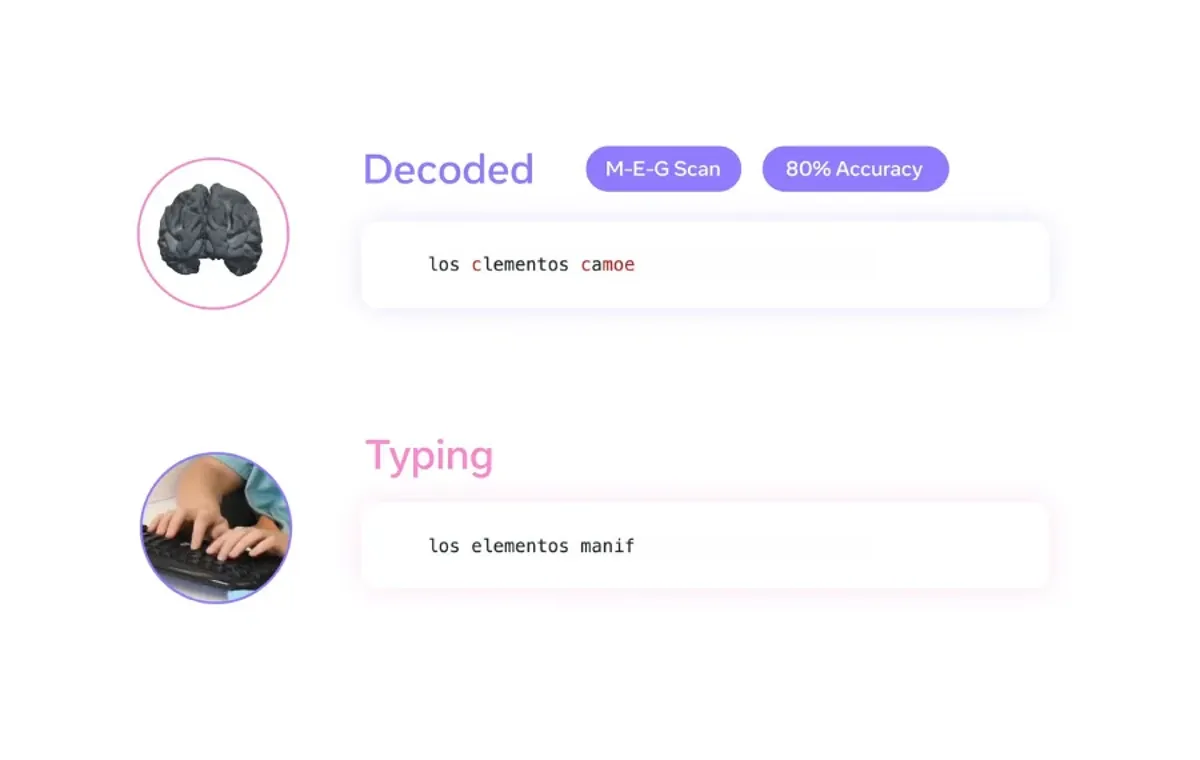

Meta's artificial intelligence research team is a step closer to decoding human thought. In collaboration with the Basque Center on Cognition, Brain and Language, the company has developed an artificial intelligence model capable of reconstructing sentences from brain activity with up to 80% accuracy. The research relies on a non-invasive way of recording brain activity, and the company says it could pave the way for technology that helps people who have lost the ability to speak.

How it works

Unlike current brain-computer interfaces, which often require invasive implants, Meta’s approach relies on magnetoencephalography (MEG) and electroencephalography (EEG). These techniques measure brain activity without surgery. The artificial intelligence model was trained on recordings of brain activity from 35 volunteers as they typed sentences. When tested on new sentences, Meta claims that it can accurately predict up to 80% of the characters typed using MEG data, which is at least twice as effective as EEG-based decoding.
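To make the "80% of the characters" figure more concrete, here is a minimal sketch of one common way to score decoded text against what was actually typed: character accuracy computed as one minus the character error rate, using edit distance. This is an assumption for illustration only; the article does not say which metric Meta used, and the function names `edit_distance` and `character_accuracy` are hypothetical.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance: minimum number of single-character
    insertions, deletions, and substitutions turning one string
    into the other."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution_cost = 0 if ref_char == hyp_char else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def character_accuracy(reference: str, hypothesis: str) -> float:
    """Illustrative metric (an assumption, not Meta's stated method):
    1 minus the character error rate, clamped at 0."""
    if not reference:
        raise ValueError("reference text must not be empty")
    error_rate = edit_distance(reference, hypothesis) / len(reference)
    return max(0.0, 1.0 - error_rate)


if __name__ == "__main__":
    typed = "the cat sat on the mat"    # what the volunteer typed
    decoded = "the cat sat an the mat"  # hypothetical decoder output
    print(f"character accuracy: {character_accuracy(typed, decoded):.1%}")
```

Under this kind of metric, a decoder that gets one character wrong in a five-character word scores 80% on that word.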

This method still has limitations. MEG requires a magnetically shielded room, and participants must remain still for accurate readings. The technology has also been tested only on healthy people, so its effectiveness for people with brain injuries is unclear.

Artificial intelligence is also mapping how words are formed in our minds

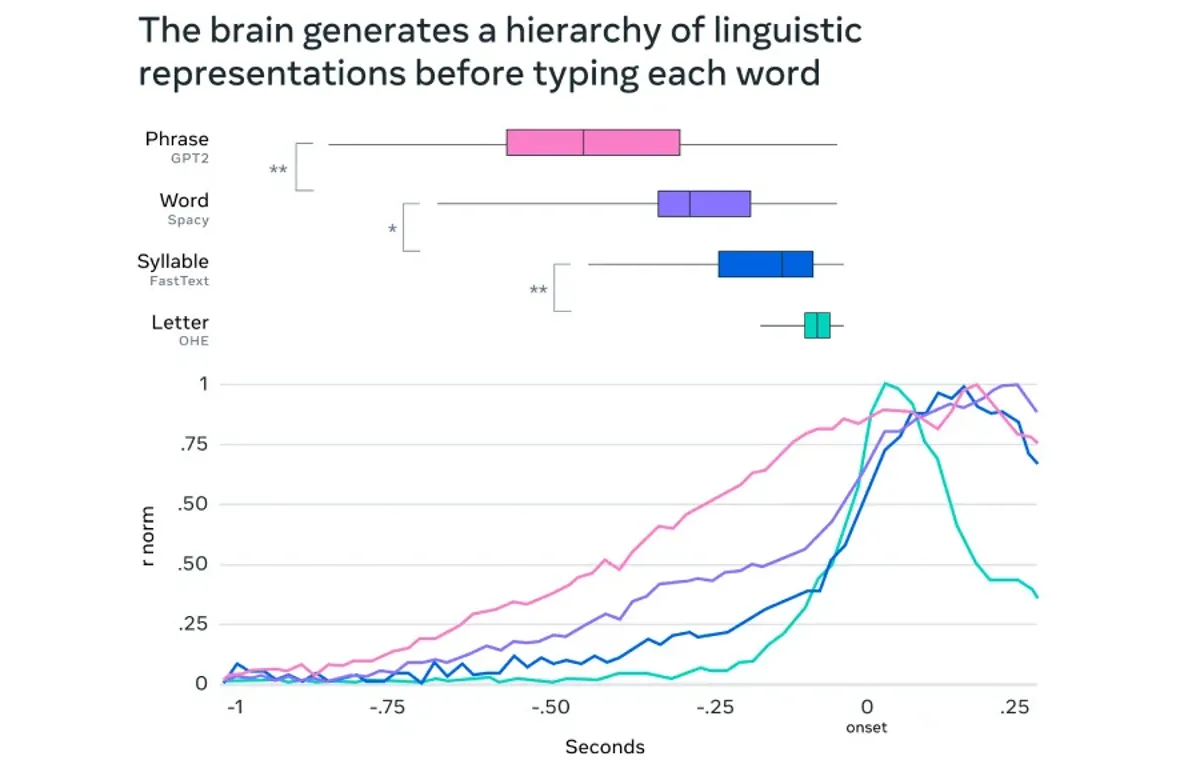

Beyond decoding text, Meta’s artificial intelligence helps researchers understand how the brain converts ideas into language. The model analyzes MEG recordings and tracks brain activity at millisecond resolution. It shows how the brain converts abstract thoughts into words, syllables, and even finger movements when typing.

One key finding is that the brain uses a “dynamic neural code”, a mechanism that chains together the different stages of language formation while keeping past information available. This could explain how people assemble sentences when speaking or typing.

Meta’s research reaffirms that artificial intelligence could someday enable non-invasive brain-computer interfaces for people who cannot communicate verbally. But at the moment, this technology is not ready for real-world use. Decoding accuracy still needs to improve, and the limitations of MEG hardware make it impractical outside the laboratory environment.

Meta is investing in partnerships to advance this research. The company has announced a $2.2 million donation to the Rothschild Foundation Hospital to support ongoing studies. It is also working with European institutions such as NeuroSpin, Inria and CNRS to develop better artificial intelligence in this area.

Source: gizmochina