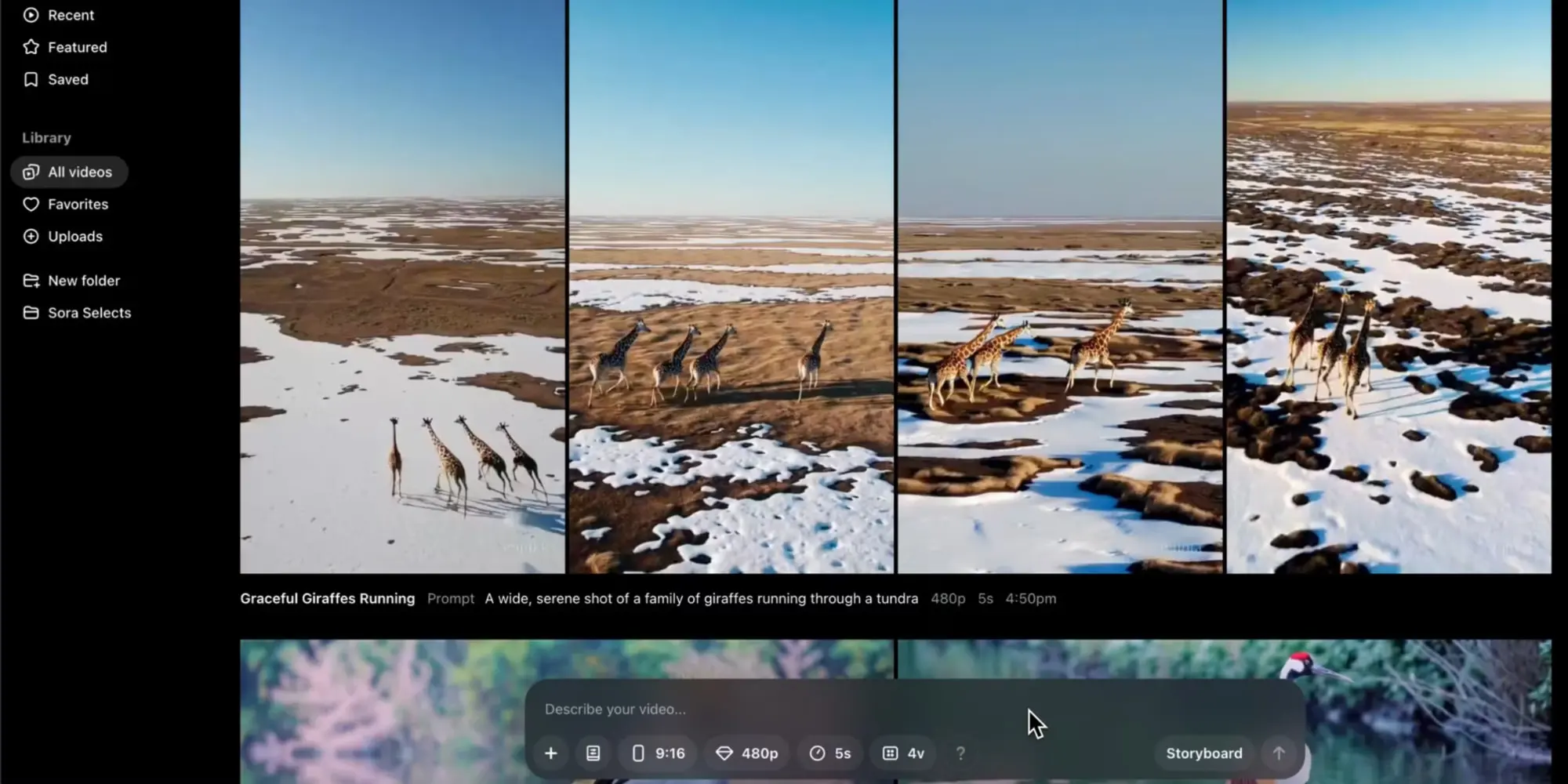

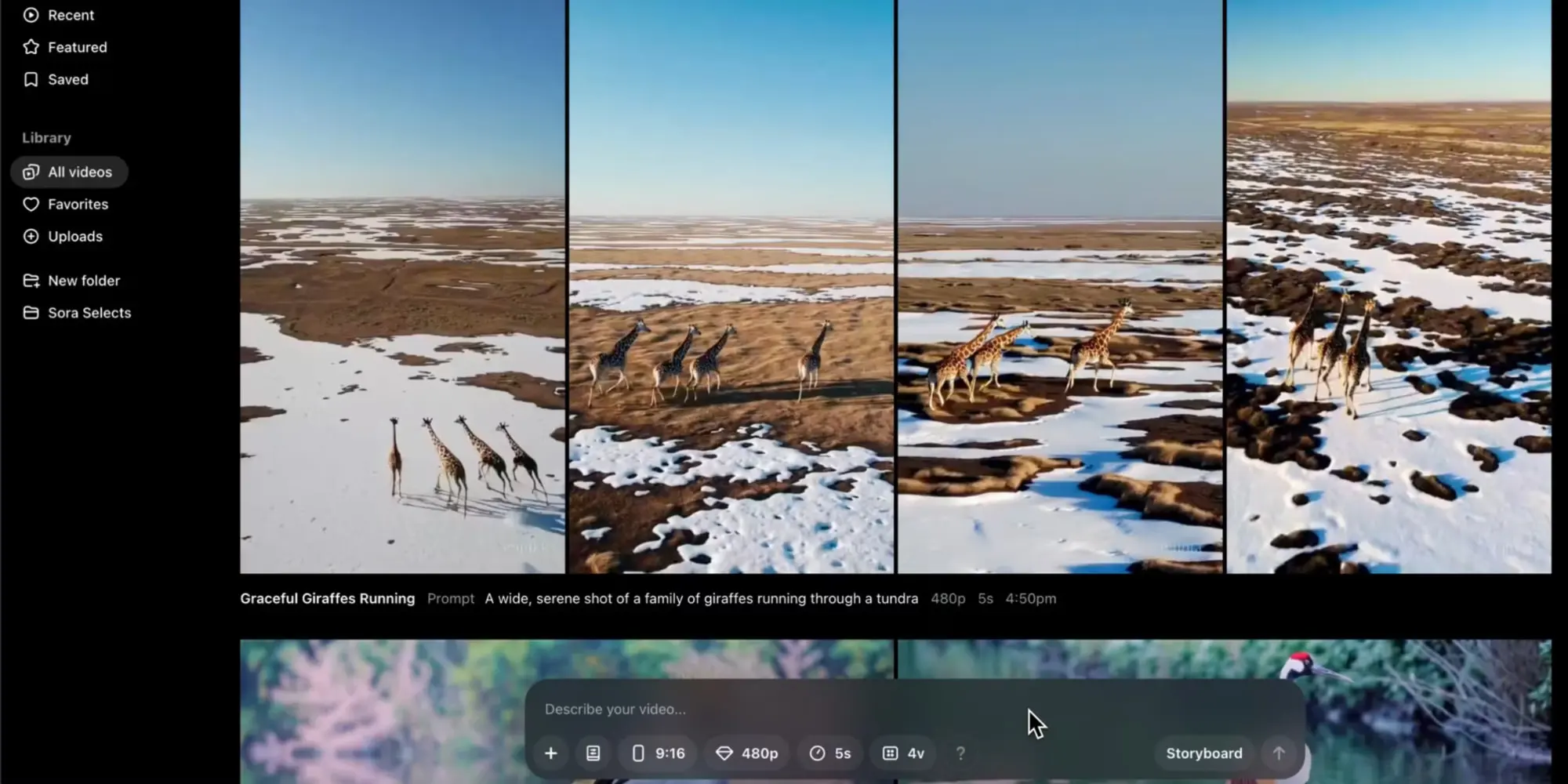

Last night, OpenAI released Sora Turbo, a new version of its video synthesis model. This version is available to ChatGPT Plus and Pro subscribers through a dedicated website. The AI model can generate videos up to 20 seconds long, at resolutions up to 1080p, from a text or image prompt.

OpenAI announced that Sora would be available today to ChatGPT Plus and Pro subscribers in the United States and many other parts of the world, although it has not yet been released in Europe. Even after the launch, however, some Plus subscribers trying to use the tool were met with a message saying, “Registrations are temporarily disabled due to heavy traffic.”

Out of caution, OpenAI is currently restricting Sora’s ability to generate videos of people. At launch, uploads that include human subjects face limitations while OpenAI improves its deepfake-prevention systems. The platform also blocks child sexual abuse material (CSAM) and sexual deepfakes. OpenAI says it maintains active monitoring and conducted testing to identify potential misuse scenarios before release.

When OpenAI first unveiled Sora in February, the model’s relatively high quality surprised AI experts. In recent months, however, competing video synthesis models such as Google’s Veo, Runway’s Gen-3 Alpha, Kling, MiniMax, and a new model called Hunyuan Video have taken some of the shine off Sora. Even so, the final release of this highly anticipated video model is a major milestone for OpenAI. Sora lets users create videos in different aspect ratios and can blend user-provided content with AI-generated content. OpenAI says Sora Turbo processes video generation requests faster than the research version previewed in February 2024.

ChatGPT Plus subscribers ($20 a month) can generate up to 50 videos a month at 480p resolution, or a smaller number of videos at 720p. Pro subscribers ($200 a month) get broader capabilities, including higher resolution options and longer video durations. OpenAI plans to introduce tailored pricing tiers in early 2025. The company also showed off a new feature called “Storyboard” that lets users direct a video containing multiple actions, frame by frame.

Safety measures and restrictions

Alongside the launch, OpenAI also published the Sora System Card for the first time. The card contains technical details on how the model works and on the safety testing the company carried out before release. Sora also uses a “recaptioning” technique, similar to the one used in the company’s DALL-E 3 image generation model, to “generate highly descriptive captions for the visual training data.” OpenAI writes that this lets Sora “follow the user’s text instructions in the generated video more faithfully.”

The company acknowledged technical limitations in the current version. “This early version of Sora will make mistakes; it’s not perfect,” one developer said during the launch livestream. Reports indicate that the model struggles to simulate physics and complex actions over long durations.

In the past, we have seen that these limitations depend on the example videos used to train AI models. The current generation of AI video synthesis models has difficulty producing truly new things: the underlying architecture excels at turning existing concepts into new presentations, but so far it has usually failed at genuine originality. Still, AI video generation is in its early stages, and the technology is improving constantly.


Source: Ars Technica