The American company Cerebras has just introduced its latest artificial intelligence inference chip, named CS-3, and claims it is one of the fastest artificial intelligence chips in the world.

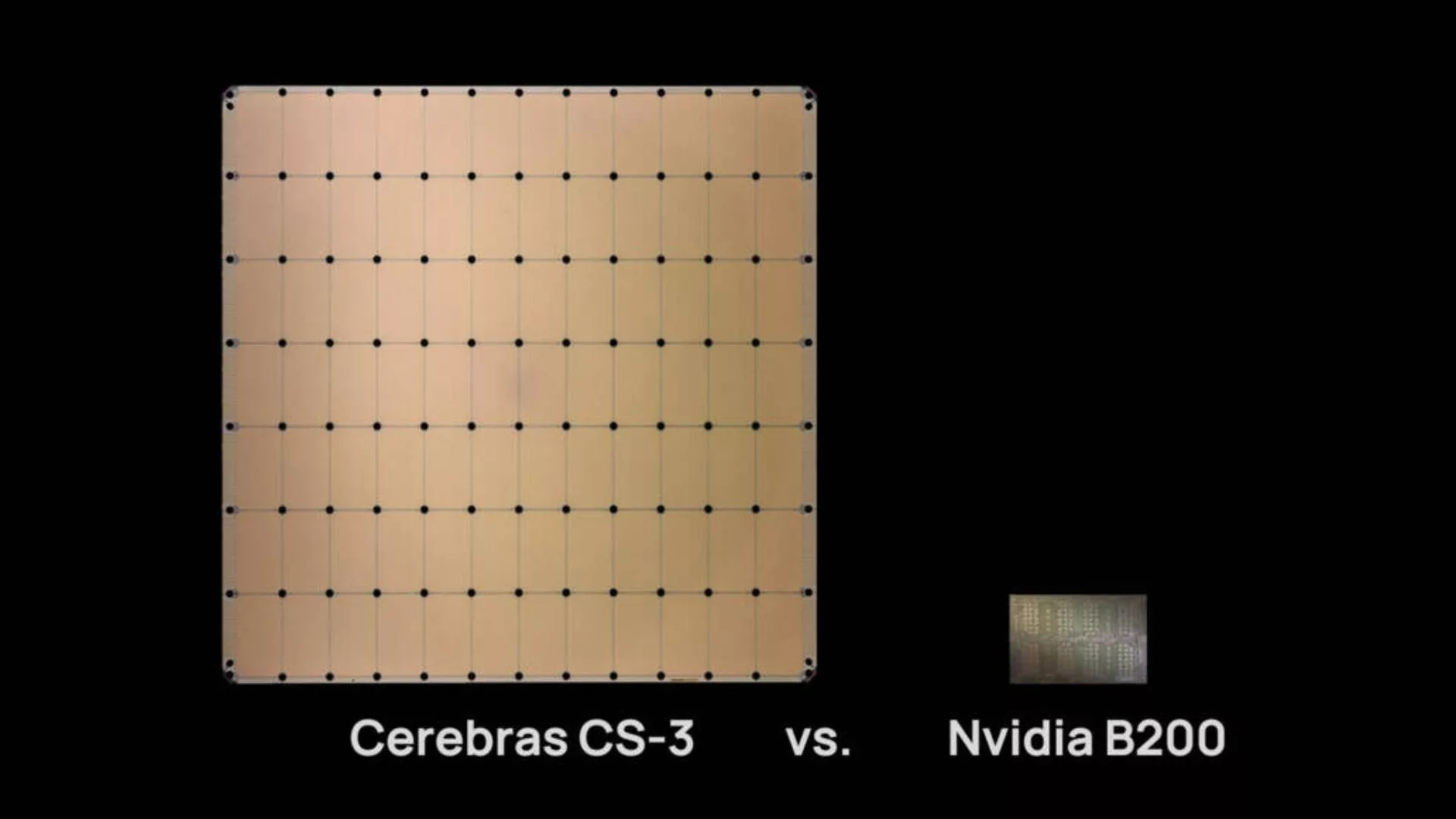

As artificial intelligence spreads across industries and devices, building chips to process these workloads has become the new focus of chip manufacturers. Nvidia is currently the undisputed king of the artificial intelligence chip market and holds a significant share of it. But Cerebras claims to have developed a chip to compete with Nvidia’s DGX100.

The new Cerebras chip is a competitor to Nvidia’s DGX100

According to TechRadar, the new Cerebras chip is equipped with 44 gigabytes of high-speed memory, which allows it to handle artificial intelligence models with billions or trillions of parameters. For models that exceed the chip’s memory capacity, Cerebras says it can split them at layer boundaries and distribute them across multiple CS-3 systems. A single CS-3 system can handle models with 20 billion parameters, while 70-billion-parameter models need only four CS-3 systems.
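The system counts quoted above are consistent with simple arithmetic on weight storage. The following is a rough sketch, not Cerebras's sizing method: it assumes 16-bit weights (2 bytes per parameter), counts only the weights (no activations or cache), and treats a gigabyte as 10^9 bytes. The function names are made up for illustration.

```python
import math

BYTES_PER_PARAM_16BIT = 2   # assumption: weights stored in 16-bit precision
MEMORY_PER_SYSTEM_GB = 44   # on-chip memory figure quoted in the article


def weights_gb(params_billions, bytes_per_param=BYTES_PER_PARAM_16BIT):
    """Approximate weight storage in GB (1 GB taken as 10^9 bytes)."""
    # billions of parameters * bytes per parameter = gigabytes
    return params_billions * bytes_per_param


def systems_needed(params_billions, memory_gb=MEMORY_PER_SYSTEM_GB):
    """Minimum number of systems whose combined memory holds the weights."""
    return math.ceil(weights_gb(params_billions) / memory_gb)


if __name__ == "__main__":
    for size in (20, 70):
        print(f"{size}B parameters: about {weights_gb(size)} GB of weights, "
              f"{systems_needed(size)} system(s)")
```

Under these assumptions a 20-billion-parameter model needs about 40 GB and fits in one system, while a 70-billion-parameter model needs about 140 GB, which rounds up to four systems.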

To increase accuracy, Cerebras emphasizes the use of 16-bit models. According to the company, 16-bit models can perform up to 5% better than 8-bit models in multi-turn conversations, math, and reasoning tasks, and they provide more reliable and accurate outputs.

The Cerebras inference platform is available to developers via chat and API access. The platform is also designed so that developers familiar with OpenAI’s Chat Completions format can easily integrate it into their products. Cerebras claims the platform can run Llama3.1 70B models at 450 tokens per second.
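To illustrate what a Chat Completions-style integration looks like, here is a minimal sketch that builds such a request with the Python standard library. The base URL and model identifier are placeholders, not values taken from Cerebras documentation, and the helper names are hypothetical; only the request and response shape follows OpenAI's Chat Completions format.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com/v1"  # placeholder: substitute the provider's real base URL
MODEL = "llama3.1-70b"                   # hypothetical model identifier


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) a Chat Completions-style POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first reply's text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # Chat Completions responses carry the reply under choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

Because the request shape matches what Chat Completions clients already send, switching an existing integration would in principle come down to changing the base URL, API key, and model name.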

At launch, the platform supports the Llama3.1 8B and 70B models. Support for Llama3 405B and Mistral Large 2 is to be added in the future.