OpenAI is widely considered the most important player in the field of artificial intelligence and has managed to maintain its lead over its competitors. The company recently introduced the GPT-4o model, which offers many improvements over the previous version. In this article, we discuss the most important differences in this model.

GPT-4o compared with GPT-4 Turbo and GPT-3.5

In short, GPT-4 is significantly smarter than GPT-3.5. It can understand more subtleties, produce more accurate results, and is much less prone to hallucinations. However, GPT-3.5 is still a very useful model thanks to its high speed, free availability and ability to handle many everyday tasks with ease, as long as you keep in mind that it is much more likely to provide false information.

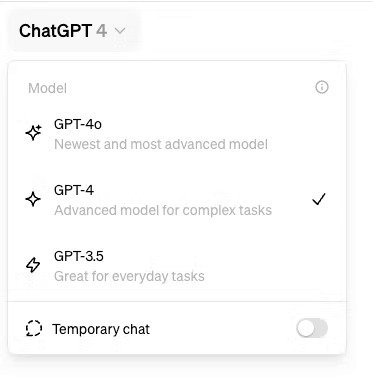

GPT-4 Turbo was considered the flagship model before the arrival of GPT-4o. It was available only to ChatGPT Plus subscribers and came with features such as custom GPTs and web access. Before turning to the capabilities of GPT-4o, it is worth noting that, according to OpenAI, the API for the new model costs half as much as GPT-4 Turbo and runs twice as fast. That is why GPT-4o is available to both free and paid users. Paid users, however, get five times the usage allowance, which means they are far less likely to hit the limit during the day.
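To make the "half the cost, twice the speed" claim concrete, here is a small back-of-the-envelope sketch. The baseline cost and response time passed in are made-up illustrative figures, not real OpenAI prices or measurements, and the function name is hypothetical. Only the ratios (half, twice, five times) come from the article.

```python
# Ratios quoted in the article (attributed to OpenAI).
COST_RATIO = 0.5        # GPT-4o API cost relative to the previous flagship
SPEED_RATIO = 2.0       # GPT-4o speed relative to the previous flagship
PAID_USAGE_FACTOR = 5   # paid users get five times the free allowance


def gpt4o_estimate(baseline_cost: float, baseline_seconds: float) -> tuple[float, float]:
    """Return (estimated cost, estimated response time) for GPT-4o.

    Both inputs are hypothetical figures for the older model.
    """
    return baseline_cost * COST_RATIO, baseline_seconds / SPEED_RATIO


if __name__ == "__main__":
    # Assumed example: a job that cost 10 units and took 8 seconds before.
    cost, seconds = gpt4o_estimate(10.0, 8.0)
    print(f"Estimated cost: {cost}, estimated time: {seconds} s")
```

Whatever the actual baseline numbers are, the same job would cost roughly half as much and finish in roughly half the time if the quoted ratios hold.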

Although GPT-4o is not markedly different from GPT-4 Turbo in terms of intelligence, its most important change is better performance.

What can the GPT-4o model do?

The key word for GPT-4o is "multimodality", meaning that the model can work with audio, images, video and text. The previous model, GPT-4 Turbo, had the same capability, but GPT-4o implements it in a completely different way.

OpenAI says it trained a single neural network on all of these modes (audio, image, video and text) simultaneously. With the older GPT-4 Turbo model, when you used voice mode, one model would first convert your speech to text. GPT-4 would then interpret that text and respond to it, and finally the response would be delivered to you as a synthetic voice.

In GPT-4o, all of these steps are handled by a single model, which improves both its performance and its capabilities. OpenAI claims that the response time when talking to GPT-4o is now only a few hundred milliseconds, roughly the same as in a real conversation with another person. Compare that with the 3 to 5 seconds older models needed to respond and you will see a significant improvement.

As well as being more efficient, this single-model design means that GPT-4o can now also interpret non-verbal elements of speech, such as tone of voice, and its responses cover a wider range of emotions. It can even sing! In other words, OpenAI has given GPT-4o capabilities in the field of affective computing.
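The contrast between the older three-stage voice mode and the single-model approach can be sketched in a few lines. This is only a toy illustration: every class and function below is a hypothetical stand-in, not an OpenAI API, and the "models" simply pass strings around. The point it shows is that a speech-to-text stage keeps the words but drops the tone of voice, while a single model that receives the audio directly can still see it.

```python
from dataclasses import dataclass


@dataclass
class Audio:
    """Toy stand-in for an audio clip."""
    words: str  # what was said
    tone: str   # non-verbal cue, e.g. "excited"


def speech_to_text(audio: Audio) -> str:
    # Hypothetical stage 1: only the words survive, the tone is lost.
    return audio.words


def text_model(prompt: str) -> str:
    # Hypothetical stage 2: a text-only model answers the transcript.
    return f"reply to: {prompt}"


def text_to_speech(text: str) -> Audio:
    # Hypothetical stage 3: a synthetic voice with a fixed, neutral tone.
    return Audio(words=text, tone="neutral")


def cascaded_voice_mode(audio: Audio) -> Audio:
    """Older design: three separate models chained together."""
    return text_to_speech(text_model(speech_to_text(audio)))


def single_model_voice_mode(audio: Audio) -> Audio:
    """Single-model design: one model sees both the words and the tone."""
    return Audio(words=f"reply to: {audio.words}", tone=audio.tone)


if __name__ == "__main__":
    question = Audio(words="hello", tone="excited")
    print(cascaded_voice_mode(question))
    print(single_model_voice_mode(question))
```

In the cascaded version the reply always comes back in a neutral tone because the tone never reaches the text model. In the single-model version the tone is available when the reply is produced, which is the kind of behavior the article attributes to GPT-4o.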

The same efficiency and integration apply to text and images as well as video. In one of the GPT-4o demos, the model is shown having a real-time conversation with a person using live video and audio. Just like a video chat with a human, GPT-4o appears to be able to interpret what it sees through the camera and make very accurate inferences. Compared with previous models, GPT-4o can also keep a much larger number of tokens in its memory, which means it can apply its intelligence to much longer conversations and larger amounts of data. This will likely make it more useful for things like helping you write a novel.

At the time of writing, not all of these features are available to the public yet, but OpenAI has announced that it will roll them out in the weeks following the initial announcement and release of the base model.

How much does the GPT-4o model cost?

GPT-4o is available to both free and paid users, but paid users get five times the usage allowance. Currently, the monthly subscription fee for ChatGPT Plus is still $20, and if you are a developer, you should check the API pricing against your needs. Either way, GPT-4o is much cheaper than other models.

How should we use GPT-4o?

As mentioned, GPT-4o is available to both free and paid users, but not all features are available immediately. Depending on when you read this, what you can do with it may differ. Still, using GPT-4o is very simple.

If you are on the paid version, you can use this model right away. If you are on the free version, it may not be enabled for you yet, and if you use it heavily, ChatGPT will automatically switch you to GPT-3.5.

Finally, the most important advantage of this model is that it can understand sound and images very quickly, so you will be able to use it for many different tasks.

Source: HowToGeek

Frequently asked questions

What is the difference between the GPT-4o model and the previous version?

The most important difference is that this model understands sound and images faster.

Will OpenAI introduce GPT-5 soon?

No date has been announced yet.